Indian Youth brigade with Abhishek Kadyan

Wednesday, March 9, 2011

Wednesday, March 2, 2011

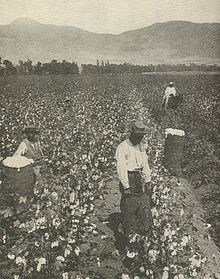

Plantation

A plantation is a large artificially established forest, farm or estate, where crops are grown for sale, often in distant markets rather than for local on-site consumption. The term plantation is informal and not precisely defined.

Crops grown on plantations include fast-growing trees (often conifers), cotton, coffee, tobacco, sugar cane, sisal, some oil seeds (notably oil palms) and rubber trees. Farms that produce alfalfa, Lespedeza, clover, and other forage crops are usually not called plantations. The term "plantation" has usually not included large orchards (except for banana plantations), but does include the planting of trees for lumber. A plantation is always a monoculture over a large area and does not include extensive naturally occurring stands of plants that have economic value. Because of its large size, a plantation takes advantage of economies of scale. Protectionist policies and natural comparative advantage have contributed to determining where plantations have been located.

Among the earliest examples of plantations were the latifundia of the Roman Empire, which produced large quantities of wine and olive oil for export. Plantation agriculture grew rapidly with the increase in international trade and the development of a worldwide economy that followed the expansion of European colonial empires. Like every economic activity, it has changed over time. Earlier forms of plantation agriculture were associated with large disparities of wealth and income, foreign ownership and political influence, and exploitative social systems such as indentured labor and slavery. The history of the environmental, social and economic issues relating to plantation agriculture is covered in articles that focus on those subjects.


Forestry

Industrial plantations

Industrial plantations are established to produce a high volume of wood in a short period of time. Plantations are grown by state forestry authorities (for example, the Forestry Commission in Britain) and/or the paper and wood industries and other private landowners (such as Weyerhaeuser and International Paper in the United States, Asia Pulp & Paper (APP) in Indonesia). Christmas trees are often grown on plantations as well. In southern and southeastern Asia, rubber, oil palm, and more recently teak plantations have replaced the natural forest.

Industrial plantations are actively managed for the commercial production of forest products. Industrial plantations are usually large-scale. Individual blocks are usually even-aged and often consist of just one or two species. These species can be exotic or indigenous. The plants used for the plantation are often genetically improved for general traits such as growth and resistance to pests and diseases, and for specific traits such as, in timber species, volumetric wood production and stem straightness. Forest genetic resources are the basis for genetic improvement. Selected individuals grown in seed orchards are a good source for seeds to develop adequate planting material.

Wood production on a tree plantation is generally higher than that of natural forests. While forests managed for wood production commonly yield between 1 and 3 cubic meters per hectare per year, plantations of fast-growing species commonly yield between 20 and 30 cubic meters or more per hectare annually; a Grand Fir plantation at Craigvinean in Scotland has a growth rate of 34 cubic meters per hectare per year (Aldhous & Low 1974), and Monterey Pine plantations in southern Australia can yield up to 40 cubic meters per hectare per year (Everard & Fourt 1974). In 2000, while plantations accounted for 5% of global forest, it is estimated that they supplied about 35% of the world's roundwood [1].
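The figures above can be cross-checked with a little arithmetic. The sketch below is illustrative only: the function name `per_area_ratio` and the use of range midpoints are assumptions, not something taken from the cited sources, and the supply share reflects harvest rather than growth, so the two ratios are only loosely comparable.

```python
def per_area_ratio(area_share, supply_share):
    """Per-hectare supply of a group relative to per-hectare supply of everything else."""
    inside = supply_share / area_share
    outside = (1.0 - supply_share) / (1.0 - area_share)
    return inside / outside


# Figures quoted in the text for the year 2000: 5% of forest area, 35% of roundwood.
plantation_vs_rest = per_area_ratio(area_share=0.05, supply_share=0.35)

# Midpoints of the quoted yield ranges (an assumption made for illustration).
managed_forest_yield = (1 + 3) / 2    # cubic meters per hectare per year
plantation_yield = (20 + 30) / 2      # cubic meters per hectare per year
yield_ratio = plantation_yield / managed_forest_yield

print(f"supply per hectare, plantations vs. other forest: {plantation_vs_rest:.1f}x")
print(f"growth per hectare, plantations vs. managed forest: {yield_ratio:.1f}x")
```

Under these assumptions both ratios come out at roughly ten or more, which is consistent with the claim that plantations supply a share of roundwood far larger than their share of forest area.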

Growth cycle

- In the first year, the ground is prepared, usually by a combination of burning, herbicide spraying, and/or cultivation, and then saplings are planted by a human crew or by machine. The saplings are usually obtained in bulk from industrial nurseries, which may specialize in selective breeding in order to produce fast-growing, disease- and pest-resistant strains.

- In the first few years until the canopy closes, the saplings are looked after, and may be dusted or sprayed with fertilizers or pesticides until established.

- After the canopy closes, with the tree crowns touching each other, the plantation is becoming dense and crowded, and tree growth is slowing due to competition. This stage is termed 'pole stage'. When competition becomes too intense (for pine trees, when the live crown is less than a third of the tree's total height), it is time to thin out the section. There are several methods for thinning, but where topography permits, the most popular is 'row-thinning', where every third or fourth or fifth row of trees is removed, usually with a harvester. Many trees are removed, leaving regular clear lanes through the section so that the remaining trees have room to expand again. The removed trees are delimbed, forwarded to the forest road, loaded onto trucks, and sent to a mill. A typical pole stage plantation tree is 7–30 cm in diameter at breast height (dbh). Such trees are sometimes not suitable for timber, but are used as pulp for paper and particleboard, and as chips for oriented strand board.

- As the trees grow and become dense and crowded again, the thinning process is repeated. Depending on growth rate and species, trees at this age may be large enough for timber milling; if not, they are again used as pulp and chips.

- Somewhere between year 10 and year 60, the plantation is mature and (in economic terms) is falling off the back side of its growth curve. That is to say, it is passing the point of maximum wood growth per hectare per year, and so is ready for the final harvest. All remaining trees are felled, delimbed, and taken to be processed.

- The ground is cleared, and the cycle is repeated.
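The thinning step in the cycle above can be sketched in code. This is a minimal illustration under stated assumptions: the one-third live-crown threshold is the rule of thumb the text gives for pine, while the function names, parameters and the 0-based row numbering are hypothetical choices made for the example.

```python
def needs_thinning(live_crown_height_m, total_height_m, threshold=1 / 3):
    """Return True when the live crown is less than `threshold` of total tree height."""
    if total_height_m <= 0:
        raise ValueError("total_height_m must be positive")
    return live_crown_height_m / total_height_m < threshold


def rows_to_remove(n_rows, every):
    """0-based indices of the rows cut when every `every`-th row is removed."""
    if every < 2:
        raise ValueError("every must be at least 2, or all rows would be removed")
    return [i for i in range(n_rows) if (i + 1) % every == 0]


# A 15 m pine with a 4 m live crown falls below the one-third threshold.
print(needs_thinning(live_crown_height_m=4, total_height_m=15))

# Row-thinning a 12-row section by removing every third row.
print(rows_to_remove(n_rows=12, every=3))
```

Removing every third row leaves two adjacent rows of trees between each cleared lane, which is what gives the remaining crowns room to expand.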

Some plantation trees, such as pines and eucalyptus, can be at high risk of fire damage because their leaf oils and resins are flammable to the point of a tree being explosive under some conditions. Conversely, an afflicted plantation can in some cases be cleared of pest species cheaply through the use of a prescribed burn, which kills all lesser plants but does not significantly harm the mature trees.

Criticism of industrial plantations

In contrast to a naturally regenerated forest, plantations are typically grown as even-aged monocultures, primarily for timber production.

- Plantations are usually near- or total monocultures. That is, the same species of tree is planted across a given area, whereas a natural forest would contain a far more diverse range of tree species.

- Plantations may include tree species that would not naturally occur in the area. They may include unconventional types such as hybrids, and genetically modified trees may be used sometime in the future. Since the primary interest in plantations is to produce wood or pulp, the types of trees found in plantations are those that are best-suited to industrial applications. For example, pine, spruce and eucalyptus are widely planted far beyond their natural range because of their fast growth rate, tolerance of rich or degraded agricultural land and potential to produce large volumes of raw material for industrial use.

- Plantations are always young forests in ecological terms. Typically, trees grown in plantations are harvested after 10 to 60 years, rarely up to 120 years. This means that the forests produced by plantations do not contain the type of growth, soil or wildlife typical of old-growth natural forest ecosystems. Most conspicuous is the absence of decaying dead wood, a crucial component of natural forest ecosystems.

In the 1970s, Brazil began to establish high-yield, intensively managed, short rotation plantations. These types of plantations are sometimes called fast-wood plantations or fiber farms and are often managed on a short-rotation basis, with rotations as short as 5 to 15 years. They are becoming more widespread in South America, Asia and other areas. The environmental and social impacts of this type of plantation have made it controversial. In Indonesia, for example, large multinational pulp companies have harvested large areas of natural forest without regard for regeneration. From 1980 to 2000, about 50% of the 1.4 million hectares of pulpwood plantations in Indonesia were established on what was formerly natural forest land.

The replacement of natural forest with tree plantations has also caused social problems. In some countries, again, notably Indonesia, conversions of natural forest are made with little regard for rights of the local people. Plantations established purely for the production of fiber provide a much narrower range of services than the original natural forest for the local people. India has sought to limit this damage by limiting the amount of land owned by one entity and, as a result, smaller plantations are owned by local farmers who then sell the wood to larger companies. Some large environmental organizations are critical of these high-yield plantations and are running an anti-plantation campaign, notably the Rainforest Action Network and Greenpeace.

Farm or home plantations

Farm or home plantations are typically established for the production of timber and firewood for home use and sometimes for sale. Management may be less intensive than with industrial plantations. In time, this type of plantation can become difficult to distinguish from naturally regenerated forest.

Teak and bamboo plantations in India have given good results and offer an alternative crop to farmers in central India, where conventional farming was popular. Because of the rising input costs of farming, many farmers have established teak and bamboo plantations, which require very little water (only during the first two years). Teak and bamboo have legal protection from theft. Bamboo, once planted, gives output for 50 years until flowering occurs. Teak requires 20 years to grow to full maturity and fetch returns. Indirectly, such plantations also help to mitigate climate change.

Environmental plantations

These may be established for watershed or soil protection. They are established for erosion control, landslide stabilization and windbreaks. Such plantations are established to foster native species and promote forest regeneration on degraded lands as a tool of environmental restoration.

Ecological impact

Probably the single most important factor in a plantation's effect on the local environment is the site where it is established. If natural forest is cleared for a planted forest then a reduction in biodiversity and loss of habitat will likely result. In some cases, establishing a plantation may involve draining wetlands and replacing the mixed hardwoods that formerly predominated with pine species. If a plantation is established on abandoned agricultural land, or highly degraded land, it can result in an increase in both habitat and biodiversity. A planted forest can be profitably established on lands that will not support agriculture or that suffer from a lack of natural regeneration.

The tree species used in a plantation is also an important factor. Where non-native varieties or species are grown, few of the native fauna are adapted to exploit these and further biodiversity loss occurs. However, even non-native tree species may serve as corridors for wildlife and act as a buffer for native forest, reducing edge effect.

Once a plantation is established, how it is managed becomes the important environmental factor. The single most important factor of management is the rotation period. Plantations harvested on longer rotation periods (30 years or more) can provide similar benefits to a naturally regenerated forest managed for wood production, on a similar rotation. This is especially true if native species are used. In the case of exotic species, the habitat can be improved significantly if the impact is mitigated by measures such as leaving blocks of native species in the plantation, or retaining corridors of natural forest. In Brazil, similar measures are required by government regulations.

Plantations and natural forest loss

Many forestry experts claim that the establishment of plantations will reduce or eliminate the need to exploit natural forest for wood production. In principle this is true: because plantations are highly productive, less land is needed. Many point to the example of New Zealand, where 19% of the forest area provides 99% of the supply of industrial round wood. It has been estimated that the world's needs for fiber could be met by just 5% of the world forest (Sedjo & Botkin 1997). However, in practice, plantations are replacing natural forest, for example in Indonesia. According to the FAO, about 7% of the natural closed forest being lost in the tropics is land being converted to plantations. The remaining 93% of the loss is land being converted to agriculture and other uses. Worldwide, an estimated 15% of plantations in tropical countries are established on closed canopy natural forest.

In the Kyoto Protocol, there are proposals encouraging the use of plantations to reduce carbon dioxide levels (though this idea is being challenged by some groups on the grounds that the sequestered CO2 is eventually released after harvest).

Other types of plantation

Crops may be called plantation crops because of their association with a specific type of farming economy. Most of these involve a large landowner, raising crops with economic value rather than for subsistence, with a number of employees carrying out the work. Often the term referred to crops newly introduced to a region. In past times it has been associated with slavery, indentured labour, and other economic models of high inequity. However, arable and dairy farming are both usually (but not always) excluded from such definitions. A comparable economic structure in antiquity was the latifundia that produced commercial quantities of olive oil or wine for export. Bananas are one example of a plantation crop; others are described below.

High value food crops

Plantings of a number of trees or shrubs grown for food or beverage, including tea, coffee, and cacao, are generally called plantations. Some spice and high value crops grown from permanent perennial stock, such as black pepper, may also be so called. When the holding belongs to a single individual, that person may be called a planter.

Sugar

Sugar plantations were highly valued in the Caribbean by the British and French colonists in the 19th and 20th centuries and the use of sugar in Europe rose during this period. Sugarcane is still an important crop in Cuba. Sugar plantations also arose in countries such as Barbados and Cuba because of the natural endowments that they had. These natural endowments included soil that was conducive to growing sugar and a high marginal product of labor realized through the increasing number of slaves.

Rubber

Plantings of para rubber, the tree Hevea brasiliensis, are usually called plantations.

Orchards

Fruit orchards are sometimes considered to be plantations.

Arable crops

These include tobacco, sugarcane, pineapple, and cotton, especially in historical usage.

Before the rise of cotton in the American South, indigo and rice were also sometimes called plantation crops.

Fishing plantations in Newfoundland and Labrador

When Newfoundland was colonized by England in 1610, the original colonists were called "Planters" and their fishing rooms were known as "fishing plantations". These terms were used well into the 20th century.

The following three plantations are maintained by the Government of Newfoundland and Labrador as provincial heritage sites:

- Sea-Forest Plantation was a 17th-century fishing plantation established at Cuper's Cove (present-day Cupids) under a royal charter issued by King James I.

- Mockbeggar Plantation is an 18th-century fishing plantation at Bonavista.

- Pool Plantation was a 17th-century fishing plantation maintained by Sir David Kirke and his heirs at Ferryland. The plantation was destroyed by French invaders in 1696.

Other fishing plantations:

- Bristol's Hope Plantation, a 17th-century fishing plantation established at Harbour Grace, created by the Bristol Society of Merchant-Adventurers.

- Benger Plantation, an 18th-century fishing plantation maintained by James Benger and his heirs at Ferryland. It was built on the site of Georgia plantation.

- Piggeon's Plantation, an 18th-century fishing plantation maintained by Ellias Piggeon at Ferryland.

Slavery, para-slavery and plantations

Slave labor extracted from forcibly transported Africans was used extensively to work on early plantations (such as cotton and sugar plantations) in the United States, throughout the Caribbean, the Americas and in European-occupied areas of Africa. Several notable historians and economists such as Eric Williams, Walter Rodney and Karl Marx contend that the global capitalist economy was largely founded on the creation and produce of thousands of slave labour camps based in colonial plantations exploiting tens of millions of abducted Africans.

In modern times, the low wages typically paid to plantation workers are the basis of plantation profitability in some areas with minimal employee-protection legislation. Sugarcane plantations in the Caribbean and Brazil, worked by slave labour, were also examples of the plantation system.

In more recent times, overt slavery has been replaced by "para-slavery" or slavery-in-kind, including the sharecropping system. At its most extreme, workers are in "debt bondage": they must work to pay off a debt at such punitive interest rates that it may never be paid off. Others work unreasonably long hours and are paid subsistence wages that (in practice) may only be spent in the company store.

In Brazil, a sugarcane plantation was termed an engenho ("engine"), and the 17th-century English usage for organized colonial production was "factory". Such colonial social and economic structures are discussed at Plantation economy. Sugar workers on plantations in Cuba and elsewhere in the Caribbean lived in company towns known as Bateys.

Plantations in the antebellum American South

In the American South, antebellum plantations were centered on a "plantation house", the residence of the owner, where important business was conducted. Slavery and plantations had different characteristics in different regions of the South. As the Upper South of the Chesapeake Bay Colony developed first, historians of the antebellum South defined planters as those who held 20 or more slaves. Major planters held many more, especially in the Deep South as it developed. [1] The majority of slaveholders held 10 or fewer slaves, often just a few to labor domestically. By the late 18th century, most planters in the Upper South had switched from exclusive tobacco cultivation to mixed crop production, both because tobacco had exhausted the soil and because of changing markets. The shift away from tobacco meant they had slaves in excess of the number needed for labor, and they began to sell them in the internal slave trade.

There was a variety of domestic architecture on plantations. The largest and wealthiest planter families, for instance, those with estates fronting on the James River in Virginia, constructed mansions in brick and Georgian style, e.g. Shirley Plantation. Common or smaller planters in the late 18th and 19th century had more modest wood frame buildings, such as Southall Plantation in Charles City County.

In the Low Country of South Carolina, by contrast, even before the American Revolution, planters holding large rice and cotton plantations typically owned hundreds of slaves. In Charleston and Savannah, the elite also held numerous slaves to work as household servants. The 19th-century development of the Deep South for cotton cultivation depended on large plantations with much more acreage than was typical of the Chesapeake Bay area, and for labor, planters held hundreds of slaves.

Until December 1865 slavery was legal in parts of the United States. Most slaves were employed in agriculture, and "planter" was a term commonly used to describe a farmer with many slaves.

The term "planter" has no universally accepted definition but academic historians have defined it to identify the elite class, "a landowning farmer of substantial means."[2] In the "Black Belt" counties of Alabama and Mississippi, the terms "planter" and "farmer" were often synonymous.[3] Robert Fogel and Stanley Engerman define large planters as owning over 50 slaves, and medium planters as owning between 16 and 50 slaves.[4] In his study of Black Belt counties in Alabama, Jonathan Wiener defines planters by ownership of real property, rather than of slaves. A planter, for Wiener, owned at least 10,000 dollars' worth of real estate in 1850 and 32,000 dollars' worth in 1860, equivalent to about the top 8 percent of landowners.[5] In his study of southwest Georgia, Lee Formwalt also defines planters in size of land holdings rather than slaves. Formwalt’s planters are in the top 4.5 percent of landowners, translating into real estate worth 6,000 dollars or more in 1850, 24,000 dollars or more in 1860, and 11,000 dollars or more in 1870.[6] In his study of Harrison County, Texas, Randolph B. Campbell classifies large planters as owners of 20 slaves, and small planters as owners of between 10 and 19 slaves.[7] In Chicot and Phillips Counties, Arkansas, Carl H. Moneyhon defines large planters as owners of 20 or more slaves, and 600 or more acres.[8]

Blood donation

A blood donation occurs when a person voluntarily has blood drawn and used for transfusions or made into medications by a process called fractionation.

In the developed world, most blood donors are unpaid volunteers who give blood for a community supply. In poorer countries, established supplies are limited and donors usually give blood when family or friends need a transfusion. Many donors donate as an act of charity, but some are paid and in some cases there are incentives other than money such as paid time off from work. A donor can also have blood drawn for their own future use. Donating is relatively safe, but some donors have bruising where the needle is inserted or may feel faint.

Potential donors are evaluated for anything that might make their blood unsafe to use. The screening includes testing for diseases that can be transmitted by a blood transfusion, including HIV and viral hepatitis. The donor is also asked about medical history and given a short physical examination to make sure that the donation is not hazardous to his or her health. How often a donor can give varies from days to months based on what he or she donates and the laws of the country where the donation takes place. For example, in the United States donors must wait 8 weeks (56 days) between whole blood donations but only three days between plateletpheresis donations.[1]
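The waiting periods in the example above translate directly into a date calculation. The sketch below is illustrative only: it uses the two United States intervals quoted in the text, assumes the donor repeats the same type of donation, and the names `MIN_INTERVAL_DAYS` and `next_eligible_date` are hypothetical.

```python
from datetime import date, timedelta

# Minimum waiting periods quoted in the text for the United States, in days.
MIN_INTERVAL_DAYS = {
    "whole_blood": 56,
    "plateletpheresis": 3,
}


def next_eligible_date(last_donation, donation_type):
    """Earliest date for another donation of the same type."""
    if donation_type not in MIN_INTERVAL_DAYS:
        raise ValueError(f"unknown donation type: {donation_type}")
    return last_donation + timedelta(days=MIN_INTERVAL_DAYS[donation_type])


last = date(2011, 3, 2)
print(next_eligible_date(last, "whole_blood"))
print(next_eligible_date(last, "plateletpheresis"))
```

Other countries and other donation types use different intervals, so the table would need to be replaced rather than extended for use elsewhere.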

The amount of blood drawn and the methods vary. The collection can be done manually or with automated equipment that only takes specific portions of the blood. Most of the components of blood used for transfusions have a short shelf life, and maintaining a constant supply is a persistent problem.


Types of donation

Blood donations are divided into groups based on who will receive the collected blood.[2] An allogeneic (also called homologous) donation is when a donor gives blood for storage at a blood bank for transfusion to an unknown recipient. A directed donation is when a person, often a family member, donates blood for transfusion to a specific individual.[3] Directed donations are relatively rare.[4] A replacement donor donation is a hybrid of the two and is common in developing countries such as Ghana.[5] In this case, a friend or family member of the recipient donates blood to replace the stored blood used in a transfusion, ensuring a consistent supply. When a person has blood stored that will be transfused back to the donor at a later date, usually after surgery, that is called an autologous donation.[6] Blood that is used to make medications can be made from allogeneic donations or from donations exclusively used for manufacturing.[7]

The actual process varies according to the laws of the country, and recommendations to donors vary according to the collecting organization.[8][9][10] The World Health Organization gives recommendations for blood donation policies,[11] but in developing countries many of these are not followed. For example, the recommended testing requires laboratory facilities, trained staff, and specialized reagents, all of which may be unavailable or too expensive in developing countries.[12]

An event where donors come to give allogeneic blood is sometimes called a blood drive or a blood donor session. These can occur at a blood bank but they are often set up at a location in the community such as a shopping center, workplace, school, or house of worship.[13]

Screening

Donors are typically required to give consent for the process, and this requirement means that minors cannot donate without permission from a parent or guardian.[14] In some countries, answers to the screening questions are associated with the donor's blood, but not name, to provide anonymity; in others, such as the United States, names are kept to create lists of ineligible donors.[15] If a potential donor does not meet the screening criteria, they are deferred. This term is used because many donors who are ineligible may be allowed to donate later.

The donor's race or ethnic background is sometimes important since certain blood types, especially rare ones, are more common in certain ethnic groups.[16] Historically, donors were segregated or excluded on race, religion, or ethnicity, but this is no longer a standard practice.[17]

Recipient safety

Donors are screened for health risks that might make the donation unsafe for the recipient. Some of these restrictions are controversial, such as restricting donations from men who have sex with men for HIV risk.[18] Autologous donors are not always screened for recipient safety problems since the donor is the only person who will receive the blood.[19] Donors are also asked about medications such as dutasteride since they can be dangerous to a pregnant woman receiving the blood.[20]

Donors are examined for signs and symptoms of diseases that can be transmitted in a blood transfusion, such as HIV, malaria, and viral hepatitis. Screening may extend to questions about risk factors for various diseases, such as travel to countries at risk for malaria or variant Creutzfeldt-Jakob Disease (vCJD). These questions vary from country to country. For example, while blood centers in Québec, Poland, and many other places defer donors who lived in the United Kingdom for risk of vCJD,[21][22] donors in the United Kingdom are only restricted for vCJD risk if they have had a blood transfusion in the United Kingdom.[23]

Donor safety

The donor is also examined and asked specific questions about their medical history to make sure that donating blood is not hazardous to their health. The donor's hematocrit or hemoglobin level is tested to make sure that the loss of blood will not make them anemic, and this check is the most common reason that a donor is ineligible.[24] Pulse, blood pressure, and body temperature are also evaluated. Elderly donors are sometimes also deferred on age alone because of health concerns.[25] The safety of donating blood during pregnancy has not been studied thoroughly and pregnant women are usually deferred.[26]

Blood testing

The donor's blood type must be determined if the blood will be used for transfusions. The collecting agency usually identifies whether the blood is type A, B, AB, or O and the donor's Rh (D) type and will screen for antibodies to less common antigens. More testing, including a crossmatch, is usually done before a transfusion. Group O is often cited as the "universal donor"[27] but this only refers to red cell transfusions. For plasma transfusions the system is reversed and AB is the universal donor type.[28]
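The "universal donor" rule and its reversal for plasma can be expressed as a set comparison. The sketch below is a simplified illustration of ABO compatibility only: it ignores the Rh (D) type, antibody screening and the crossmatch mentioned above, and the names `ANTIGENS`, `red_cells_compatible` and `plasma_compatible` are hypothetical.

```python
# ABO antigens carried on the red cells of each blood group.
ANTIGENS = {
    "O": set(),
    "A": {"A"},
    "B": {"B"},
    "AB": {"A", "B"},
}


def red_cells_compatible(donor, recipient):
    """Donor red cells must not carry an antigen that the recipient lacks."""
    return ANTIGENS[donor] <= ANTIGENS[recipient]


def plasma_compatible(donor, recipient):
    """Plasma carries antibodies against the antigens the donor lacks,
    so the rule is the reverse of the red cell rule."""
    return ANTIGENS[recipient] <= ANTIGENS[donor]


groups = list(ANTIGENS)
universal_red_cell_donors = [d for d in groups
                             if all(red_cells_compatible(d, r) for r in groups)]
universal_plasma_donors = [d for d in groups
                           if all(plasma_compatible(d, r) for r in groups)]
print(universal_red_cell_donors, universal_plasma_donors)
```

Modelling each group as a set of antigens makes the reversal explicit: the same subset test is applied with donor and recipient swapped.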

Most blood is tested for diseases, including some STDs.[29] The tests used are high-sensitivity screening tests and no actual diagnosis is made. Some of the test results are later found to be false positives using more specific testing.[30] False negatives are rare, but donors are discouraged from using blood donation for the purpose of anonymous STD screening because a false negative could mean a contaminated unit. The blood is usually discarded if these tests are positive, but there are some exceptions, such as autologous donations. The donor is generally notified of the test result.[31]

Donated blood is tested by many methods, but the core tests recommended by the World Health Organization are these four:

- Hepatitis B Surface Antigen

- Antibody to Hepatitis C

- Antibody to HIV, usually subtypes 1 and 2

- Serologic test for Syphilis

The WHO reported in 2006 that 56 out of 124 countries surveyed did not use these basic tests on all blood donations.[12]

A variety of other tests for transfusion transmitted infections are often used based on local requirements. Additional testing is expensive, and in some cases the tests are not implemented because of the cost.[32] These additional tests include other infectious diseases such as West Nile Virus.[33] Sometimes multiple tests are used for a single disease to cover the limitations of each test. For example, the HIV antibody test will not detect a recently infected donor, so some blood banks use a p24 antigen or HIV nucleic acid test in addition to the basic antibody test to detect infected donors during that period. Cytomegalovirus is a special case in donor testing in that many donors will test positive for it.[34] The virus is not a hazard to a healthy recipient, but it can harm infants[35] and other recipients with weak immune systems.[34]

Obtaining the blood

There are two main methods of obtaining blood from a donor. The most frequent is simply to take the blood from a vein as whole blood. This blood is typically separated into parts, usually red blood cells and plasma, since most recipients need only a specific component for transfusions. A typical donation is 450 milliliters (or approximately one US pint)[36] of whole blood, though 500 milliliter donations are also common. Historically, blood donors in the People's Republic of China would donate only 200 milliliters, though larger 300 and 400 milliliter donations have become more common.[37]

The other method is to draw blood from the donor, separate it using a centrifuge or a filter, store the desired part, and return the rest to the donor. This process is called apheresis, and it is often done with a machine specifically designed for this purpose. This process is especially common for plasma and platelets.

For direct transfusions a vein can be used but the blood may be taken from an artery instead.[38] In this case, the blood is not stored, but is pumped directly from the donor into the recipient. This was an early method for blood transfusion and is rarely used in modern practice.[39] It was phased out during World War II because of problems with logistics, and doctors returning from treating wounded soldiers set up banks for stored blood when they returned to civilian life.[40]

Site preparation and drawing blood

The blood is drawn from a large arm vein close to the skin, usually the median cubital vein on the inside of the elbow. The skin over the blood vessel is cleaned with an antiseptic such as iodine or chlorhexidine[41] to prevent skin bacteria from contaminating the collected blood[41] and also to prevent infections where the needle pierced the donor's skin.[42]

A large[43] needle (16 to 17 gauge) is used to minimize shearing forces that may physically damage red blood cells as they flow through the needle.[44] A tourniquet is sometimes wrapped around the upper arm to increase the pressure of the blood in the arm veins and speed up the process. The donor may also be prompted to hold an object and squeeze it repeatedly to increase the blood flow through the vein.

Whole blood

The most common method is collecting the blood from the donor's vein into a container. The amount of blood drawn varies from 200 milliliters to 550 milliliters depending on the country, but 450–500 milliliters is typical.[34] The blood is usually stored in a flexible plastic bag that also contains sodium citrate, phosphate, dextrose, and sometimes adenine. This combination keeps the blood from clotting and preserves it during storage.[45] Other chemicals are sometimes added during processing.

The plasma from whole blood can be used for transfusions, or it can be processed into other medications using a process called fractionation. This was a development of the dried plasma used to treat the wounded during World War II, and variants on the process are still used to make a variety of other medications.[46][47]

Apheresis

Apheresis is a blood donation method where the blood is passed through an apparatus that separates out one particular constituent and returns the remainder to the donor. Usually the component returned is the red blood cells, the portion of the blood that takes the longest to replace. Using this method an individual can donate plasma or platelets much more frequently than they can safely donate whole blood.[48] The two can be combined, with a donor giving both plasma and platelets in the same donation.

Platelets can also be separated from whole blood, but they must be pooled from multiple donations. From three to ten units of whole blood are required for a therapeutic dose.[49] Plateletpheresis provides at least one full dose from each donation.
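The pooling figures above translate into simple arithmetic. The following is a minimal sketch, not a clinical rule: the function name `pooled_doses` is hypothetical, and the only inputs taken from the text are the three and ten units of whole blood quoted as the range needed for one therapeutic dose.

```python
def pooled_doses(whole_blood_units, units_per_dose):
    """Number of complete therapeutic platelet doses obtainable by pooling.

    units_per_dose is assumed to lie between 3 and 10, the range quoted
    in the text; leftover units that cannot fill a dose are ignored.
    """
    return whole_blood_units // units_per_dose


units = 30  # illustrative number of whole blood donations
fewest = pooled_doses(units, 10)  # ten units needed per dose
most = pooled_doses(units, 3)     # three units needed per dose
print(f"{units} whole blood units yield between {fewest} and {most} doses")
```

By comparison, the same number of plateletpheresis donations would provide at least one full dose each.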

Plasmapheresis is frequently used to collect source plasma, which is manufactured into medications in much the same way as the plasma from whole blood. Plasma collected at the same time as plateletpheresis is sometimes called concurrent plasma.

Apheresis is also used to collect more red blood cells than usual in a single donation and to collect white blood cells for transfusion.[50][51]

Recovery and time between donations

Donors are usually kept at the donation site for 10–15 minutes after donating since most adverse reactions take place during or immediately after the donation.[52] Blood centers typically provide light refreshments or a lunch allowance to help the donor recover.[53] The needle site is covered with a bandage and the donor is directed to keep the bandage on for several hours.[36]

Donated plasma is replaced after 2–3 days.[54] Red blood cells are replaced by bone marrow into the circulatory system at a slower rate, on average 36 days in healthy adult males. In one study, the range was 20 to 59 days for recovery.[55] These replacement rates are the basis of how frequently a donor can give blood.

Plasmapheresis and plateletpheresis donors can give much more frequently because they do not lose significant amounts of red cells. How often a donor may donate differs from country to country. For example, plasmapheresis donors in the United States are allowed to donate large volumes twice a week and could nominally give 83 liters (about 22 gallons) in a year, whereas the same donor in Japan may donate only every other week and could give only about 16 liters (about 4 gallons) in a year.[56] Red blood cells are the limiting factor for whole blood donations, and the permitted interval between donations varies widely. In Hong Kong it is from three to six months,[57] in Australia it is twelve weeks,[58] in Canada and the United States it is eight weeks,[59] and in the UK it is usually sixteen weeks but can be as little as twelve, provided that the donor gives no more than three times per year.[60]
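The yearly plasmapheresis totals quoted above can be checked with a short calculation. This is an illustrative sketch only: it assumes a 52-week year and a donor who gives at the maximum permitted frequency, and the function name is hypothetical. It derives the per-donation volume implied by each yearly total and converts liters to US gallons.

```python
LITERS_PER_US_GALLON = 3.785411784  # definition of the US liquid gallon


def implied_volume_per_donation(liters_per_year, donations_per_week,
                                weeks_per_year=52):
    """Per-donation volume implied by a yearly total.

    Assumes the donor gives at a constant rate for every week of a
    52-week year, which is a simplification.
    """
    donations_per_year = donations_per_week * weeks_per_year
    return liters_per_year / donations_per_year


us = implied_volume_per_donation(83, 2)       # twice a week
japan = implied_volume_per_donation(16, 0.5)  # every other week

print(f"United States: about {us:.2f} L per donation")
print(f"Japan: about {japan:.2f} L per donation")
print(f"83 L is about {83 / LITERS_PER_US_GALLON:.1f} US gallons")
print(f"16 L is about {16 / LITERS_PER_US_GALLON:.1f} US gallons")
```

Under these assumptions the totals imply roughly 0.8 liters per donation in the United States and roughly 0.6 liters in Japan, and the gallon figures in the text are rounded conversions of the liter values.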

Complications

Donors are screened for health problems that would put them at risk for serious complications from donating. First-time donors, teenagers, and women are at a higher risk of a reaction.[61][62] One study showed that 2% of donors had an adverse reaction to donation.[63] Most of these reactions are minor. A study of 194,000 donations found only one donor with long-term complications.[64] In the United States, a blood bank is required to report any death that might possibly be linked to a blood donation. An analysis of all reports from October 2008 to September 2009 evaluated six events and found that five of the deaths were clearly unrelated to donation, and in the remaining case they found no evidence that the donation was the cause of death.[65]

Hypovolemic reactions can occur because of a rapid change in blood pressure. Fainting is generally the worst problem encountered.[66]

The process has similar risks to other forms of phlebotomy. Bruising of the arm from the needle insertion is the most common concern. One study found that less than 1% of donors had this problem.[67] A number of less common complications of blood donation are known to occur. These include arterial puncture, delayed bleeding, nerve irritation, nerve injury, tendon injury, thrombophlebitis, and allergic reactions.[68]

Donors sometimes have adverse reactions to the sodium citrate used in apheresis collection procedures to keep the blood from clotting. Since the anticoagulant is returned to the donor along with blood components that are not being collected, it can bind the calcium in the donor's blood and cause hypocalcemia.[69] These reactions tend to cause tingling in the lips, but may cause convulsions or more serious problems. Donors are sometimes given calcium supplements during the donation to prevent these side effects.[70]

In apheresis procedures, the red blood cells are often returned. If this is done manually and the donor receives the blood from a different person, a transfusion reaction can take place. Manual apheresis is extremely rare in the developed world because of this risk and automated procedures are as safe as whole blood donations.[71]

The final risk to blood donors is from equipment that has not been properly sterilized. In most cases, the equipment that comes in direct contact with blood is discarded after use.[72] Re-used equipment was a significant problem in China in the 1990s, and up to 250,000 blood plasma donors may have been exposed to HIV from shared equipment.[73][74]

Storage, supply and demand

The collected blood is usually stored as separate components, and some of these have short shelf lives. There are no storage solutions to keep platelets for extended periods of time, though some are being studied as of 2008.[75] The longest shelf life used for platelets is seven days.[76] Red blood cells, the most frequently used component, have a shelf life of 35–42 days at refrigerated temperatures.[77][78] This can be extended by freezing the blood with glycerol, but this process is expensive, rarely done, and requires an extremely cold freezer for storage.[34] Plasma can be stored frozen for an extended period of time and is typically given an expiration date of one year, so maintaining a supply is less of a problem.[79]
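To illustrate how these shelf lives affect inventory, the sketch below computes an expiration date for each component from its collection date. It is a simplified illustration, not a description of any blood bank's system: the dictionary keys and the function name are hypothetical, the values are the upper-end figures quoted above, and actual limits depend on local regulation and storage method.

```python
from datetime import date, timedelta

# Upper-end shelf lives quoted in the text, in days. Treat these as
# illustrative values; real limits vary by jurisdiction.
SHELF_LIFE_DAYS = {
    "platelets": 7,
    "red_blood_cells": 42,
    "frozen_plasma": 365,
}


def expiration_date(component, collected_on):
    """Return the collection date plus the component's shelf life."""
    return collected_on + timedelta(days=SHELF_LIFE_DAYS[component])


collected = date(2011, 3, 1)  # arbitrary example collection date
for component in SHELF_LIFE_DAYS:
    print(component, expiration_date(component, collected).isoformat())
```

The gap between the seven-day platelet limit and the one-year plasma limit is why platelet supply is the hardest to maintain.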

The limited storage time means that it is difficult to have a stockpile of blood to prepare for a disaster. The subject was discussed at length after the September 11 attacks in the United States, and the consensus was that collecting during a disaster was impractical and that efforts should be focused on maintaining an adequate supply at all times.[80] Blood centers in the U.S. often have difficulty maintaining even a three-day supply for routine transfusion demands.[81]

The World Health Organization recognizes World Blood Donor Day on 14th June each year to promote blood donation. This is the birthday of Karl Landsteiner, the scientist who discovered the ABO blood group system.[82] As of 2008, the WHO estimated that more than 81 million units of blood were being collected annually.[83]

Benefits and incentives

The World Health Organization set a goal in 1997 for all blood donations to come from unpaid volunteer donors, but as of 2006, only 49 of 124 countries surveyed had established this as a standard.[12] Some plasmapheresis donors in the United States are still paid for donations.[84] A few countries rely on paid donors to maintain an adequate supply.[85] Some countries, such as Tanzania, have made great strides in moving towards this standard, with 20 percent of donors in 2005 being unpaid volunteers and 80 percent in 2007, but 68 of 124 countries surveyed by WHO had made little or no progress.[5] In some countries, for example Brazil, it is illegal to receive any compensation, monetary or otherwise, for the donation of blood or other human tissues.[86]

In patients prone to iron overload, blood donation prevents the accumulation of toxic quantities of iron.[87] Blood banks in the United States may be required to label the blood if it is from a therapeutic donor, so some do not accept donations from donors with any blood disease.[88] Others, such as the Australian Red Cross Blood Service, accept blood from donors with hemochromatosis, a genetic disorder that does not affect the safety of the blood.[89] Donating blood may reduce the risk of heart disease for men, but the link has not been firmly established.[90]

In Italy, blood donors receive the donation day as a paid holiday from work.[91] Other incentives are sometimes added by employers, usually time off for the purposes of donating.[92] Blood centers will also sometimes add incentives such as assurances that donors would have priority during shortages, free T-shirts or other small trinkets (e.g., first aid kits, windshield scrapers, pens), or other programs such as prize drawings for donors and rewards for organizers of successful drives.[93] Most allogeneic blood donors donate as an act of charity and do not expect to receive any direct benefit from the donation.[94]

The sociologist Richard Titmuss, in his 1970 book The Gift Relationship: From Human Blood to Social Policy, compared the merits of the commercial and non-commercial blood donation systems of the USA and the UK. The book became a bestseller in the USA, resulting in legislation to regulate the private market in blood.[95] The book is still referenced in modern debates about turning blood into a commodity.[96] The book was republished in 1997 and the same ideas and principles are applied to analogous donation programs, such as organ donation and sperm donation.[97]